Why use a drone when you know your farm best?

With boots on the ground and hand-tending, your eyes are trained to notice every important detail.

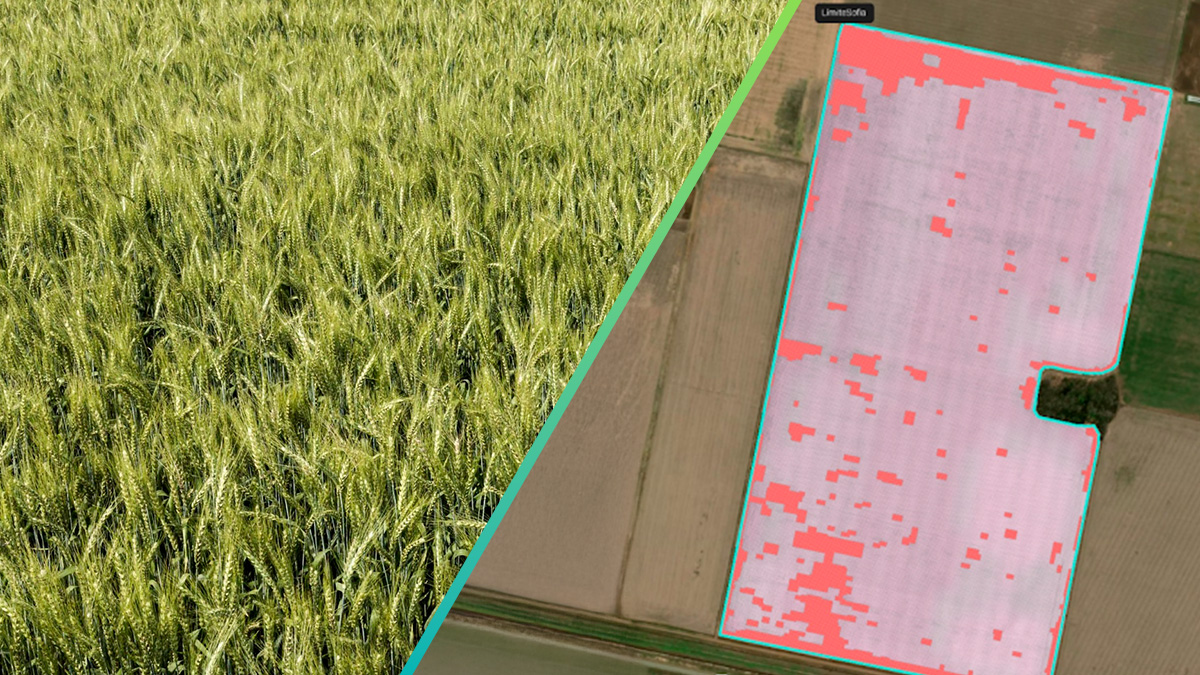

But what about the things your eyes aren't trained to see, simply because the human eye can't perceive certain wavelengths? And what if those wavelengths tell an invisible story about your farm's health?

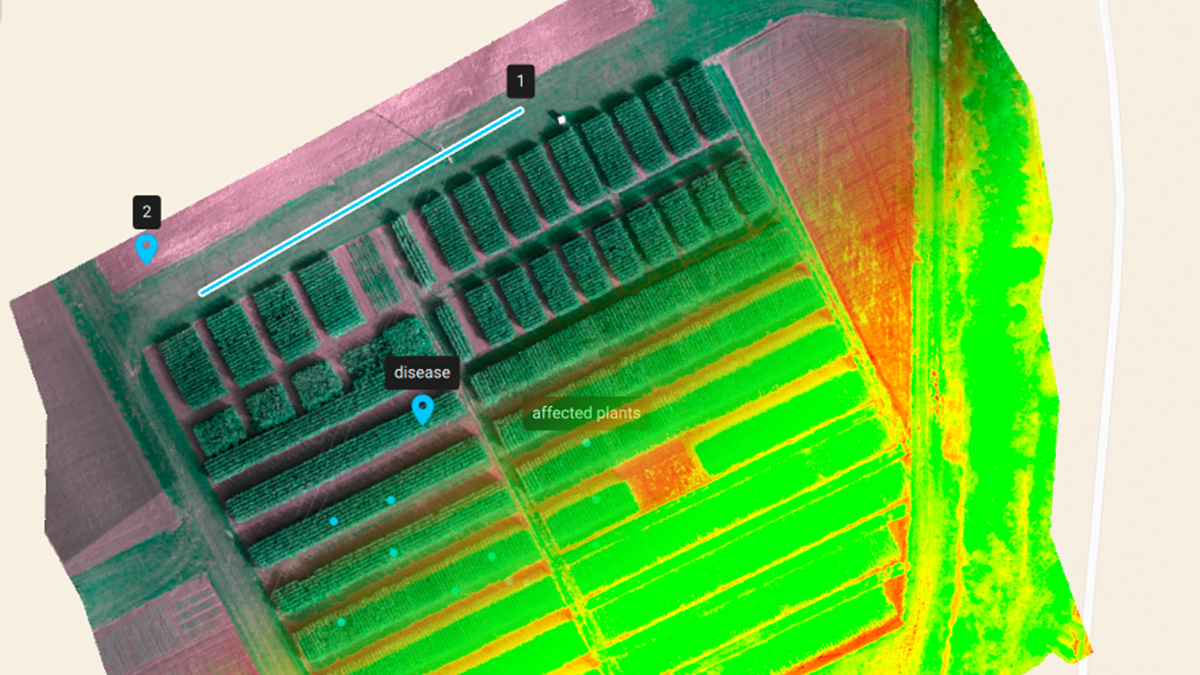

Plants reflect different amounts of red, green, blue, red edge (RE) and near-infrared (NIR) light. How much they reflect depends heavily on the state they are in, as well as on the concentration and distribution of their biochemical components. Drone-carried sensors, such as Sequoia or RedEdge M, are specifically designed to highlight the differences between healthy and distressed plants by capturing wavelengths the human eye cannot perceive.

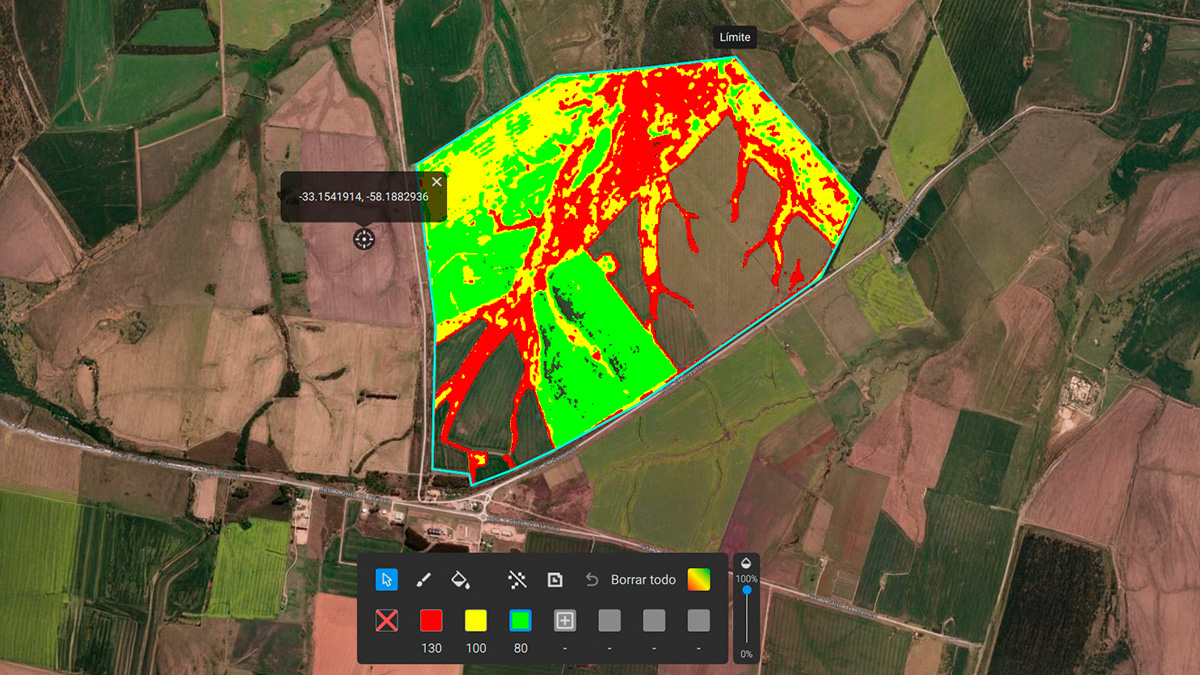

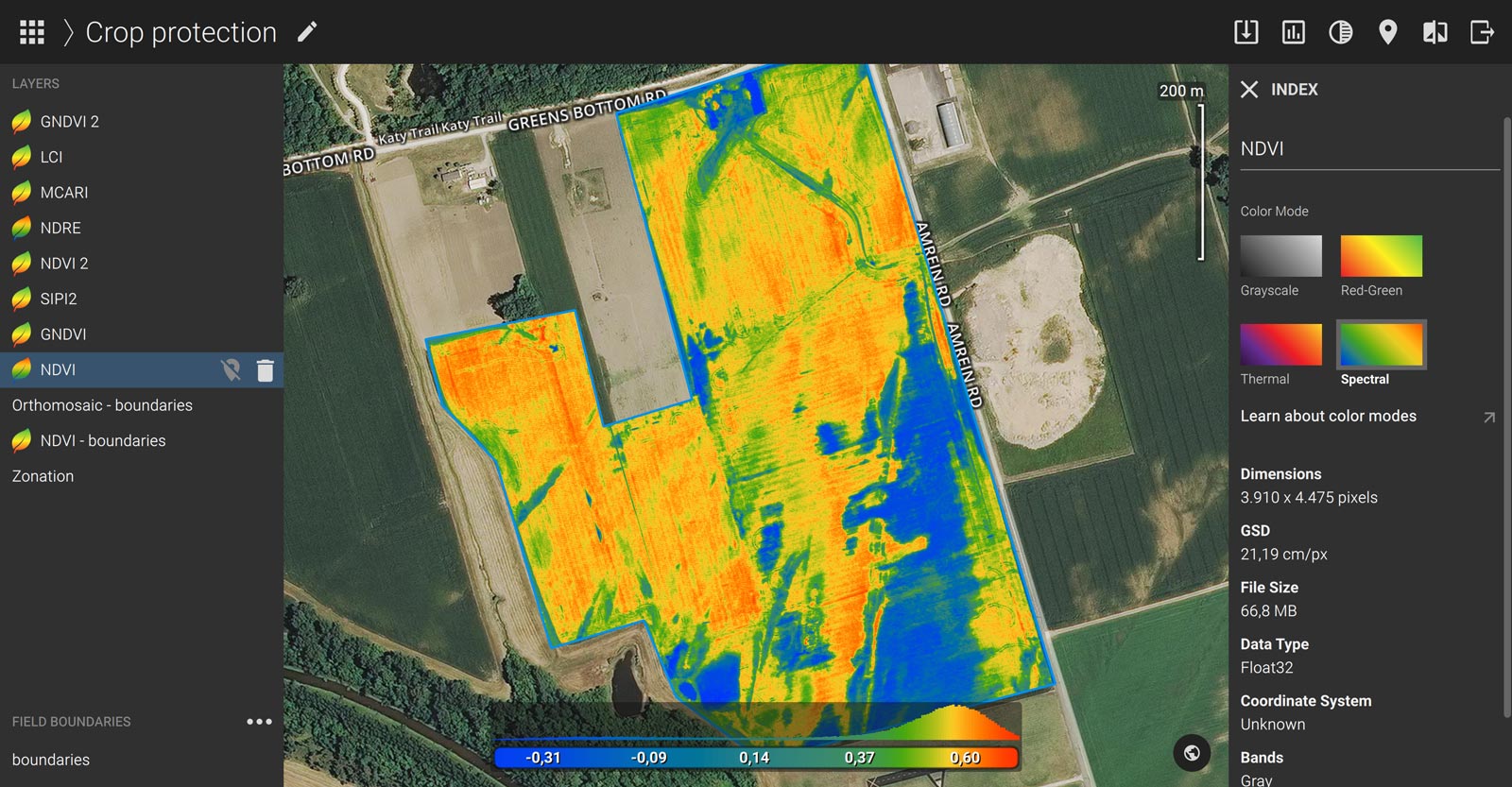

The multispectral images the sensor captures are used to create vegetation indices, such as the most commonly used NDVI (normalized difference vegetation index), as well as NDRE (normalized difference red edge), LAI (leaf area index), SAVI (soil-adjusted vegetation index) and more. Each index tells a different story about the source of a plant's distress. In many cases, these indices become an essential layer of information in crop protection and crop production, but they are also used for insurance, general farm management and more.
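
To make the idea concrete, here is a minimal sketch of how NDVI and NDRE can be computed from reflectance bands. Both use the standard normalized difference form, `(NIR - Red) / (NIR + Red)` for NDVI and `(NIR - RE) / (NIR + RE)` for NDRE. The function names and the tiny 2x2 sample grids are made up for illustration; real sensor imagery would first need calibration and band alignment, which this sketch skips.

```python
def normalized_difference(band_a, band_b):
    """Return (a - b) / (a + b) per pixel; None where a + b is zero."""
    result = []
    for row_a, row_b in zip(band_a, band_b):
        out_row = []
        for a, b in zip(row_a, row_b):
            total = a + b
            # Avoid dividing by zero on pixels with no signal in either band
            out_row.append((a - b) / total if total != 0 else None)
        result.append(out_row)
    return result


def ndvi(nir, red):
    """NDVI = (NIR - Red) / (NIR + Red)."""
    return normalized_difference(nir, red)


def ndre(nir, red_edge):
    """NDRE = (NIR - RE) / (NIR + RE)."""
    return normalized_difference(nir, red_edge)


# Hypothetical reflectance values (0 to 1) for a 2x2 patch of field
nir = [[0.50, 0.45], [0.30, 0.0]]
red = [[0.10, 0.15], [0.30, 0.0]]

ndvi_map = ndvi(nir, red)
for row in ndvi_map:
    print([None if v is None else round(v, 2) for v in row])
```

Values close to 1 generally point to dense, healthy vegetation, while values near zero or below tend to indicate bare soil, water or stressed plants.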

Additionally, the phrase 'less is more' in agriculture is closely connected to drones and sensors. Knowing where to put 'more' or 'less' fertilizer, pesticide, herbicide… or even water, comes directly from Variable Rate Maps, which are derived from crop health and vegetation indices. Applying inputs only where they are needed can reduce cost, increase yield and improve overall soil and crop quality. Saving money is always a plus, but saving while getting better yields and improving your farm's health is priceless.
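
As a rough sketch of how a Variable Rate Map could be derived from a vegetation index, the snippet below sorts each NDVI pixel into a zone and assigns that zone an application rate. The thresholds, the rates (in kg/ha) and the names `FERTILIZER_ZONES` and `rate_for_ndvi` are all invented for illustration; real prescriptions depend on the crop, the growth stage and agronomic advice.

```python
# Hypothetical zones: (upper NDVI bound, fertilizer rate in kg/ha)
FERTILIZER_ZONES = [
    (0.2, 0.0),     # bare soil or no crop: apply nothing
    (0.5, 120.0),   # stressed crop: apply more
    (1.01, 80.0),   # healthy crop: apply less
]


def rate_for_ndvi(value, zones=FERTILIZER_ZONES):
    """Return the rate of the first zone whose upper bound exceeds value."""
    if value is None:
        # No valid index for this pixel, so do not apply anything
        return 0.0
    for upper_bound, rate in zones:
        if value < upper_bound:
            return rate
    return zones[-1][1]


def variable_rate_map(ndvi_grid, zones=FERTILIZER_ZONES):
    """Turn a grid of NDVI values into a grid of application rates."""
    return [[rate_for_ndvi(v, zones) for v in row] for row in ndvi_grid]


# Made-up NDVI values for a 2x2 patch of field
ndvi_grid = [[0.67, 0.35], [0.10, None]]
rates = variable_rate_map(ndvi_grid)
print(rates)
```

The point of the sketch is the shape of the workflow: index values go in, zones come out, and each zone gets its own 'more' or 'less'.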

Hand-tending a small-scale farm is manageable, but the real value lies in taking your knowledge of the farm to the next level with tools that make the invisible visible.